Sports teams win with a range of skills and strengths. A hockey side can’t win if everyone’s playing goalie. The team also needs a center and wings to advance the puck and score goals, as well as defensive players to block the opposing team’s shots.

The same is true for artificial intelligence systems. Like a hockey team with players in different positions, an AI system that pairs a GPU with a CPU is a winning combination.

This mix of processors can bring you and your customers the lower cost and greater energy efficiency of a CPU along with the parallel processing power of a GPU. With this team approach, your customers should be able to handle the AI training and inference workloads that come their way.

In the beginning

One issue: Neither CPUs nor GPUs were originally designed for AI. In fact, both designs predate today’s AI workloads by many years. Their origins still define how they’re best used, even for AI.

GPUs were initially designed for computer graphics, virtual reality and video. Getting pixels to the screen is a task where high levels of parallelization speed things up. And GPUs are good at parallel processing. This has allowed them to be adapted for HPC and AI workloads, which analyze and learn from large volumes of data. What’s more, GPUs are often used to run HPC and AI workloads simultaneously.

GPUs are also relatively expensive. For example, Nvidia’s new H100 has an estimated retail price of around $25,000 per GPU. Your customers may incur additional cooling costs, too, because GPUs generate a lot of heat. GPUs also use a lot of power, which can further raise your customers’ operating costs.

CPUs, by contrast, were originally designed to handle general-purpose computing. A modern CPU can run just about any type of calculation, thanks to its broad instruction set.

A CPU is built mainly to process data sequentially, rather than in massively parallel fashion, and that’s good for linear and complex calculations. A CPU is also generally less expensive than a comparable GPU, needs less power and runs cooler.

In today’s cost-conscious environment, every data center manager is trying to get the most performance per dollar. Even a high-performing CPU has a cost advantage over comparable GPUs that can be extremely important for your customers.
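One rough way to frame performance per dollar is to divide throughput by total cost over a service period. The sketch below is illustrative only: the function name, the simplified cost model (purchase price plus electricity) and every input other than the roughly $25,000 H100 price cited above are assumptions, not measured figures.

```python
def performance_per_dollar(throughput, purchase_price, avg_power_watts,
                           years=3.0, usd_per_kwh=0.12):
    """Return throughput per dollar of total cost over the service period.

    Hypothetical, simplified cost model: purchase price plus electricity.
    Cooling, hosting and staffing costs are ignored.
    """
    hours = years * 365 * 24
    energy_cost = (avg_power_watts / 1000) * hours * usd_per_kwh
    return throughput / (purchase_price + energy_cost)


# Placeholder inputs for illustration only, not benchmark results.
gpu = performance_per_dollar(throughput=1000, purchase_price=25_000,
                             avg_power_watts=700)
cpu = performance_per_dollar(throughput=100, purchase_price=5_000,
                             avg_power_watts=300)
print(f"GPU: {gpu:.4f} units per dollar, CPU: {cpu:.4f} units per dollar")
```

Which processor comes out ahead depends entirely on the workload and on the figures your customers plug in. A job that gains little from parallelism will narrow or erase a GPU’s throughput lead.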

Team players

Just as a hockey team doesn’t rely on its goalie to score goals, smart AI practitioners know they can’t rely on their GPUs to do all types of processing. For some jobs, CPUs are still better.

Thanks to their larger memory capacity, CPUs are ideal for machine learning training and inference, as long as the scale is relatively small. CPUs are also good for training small neural networks, data preparation and feature extraction.

CPUs offer other advantages, too. As noted earlier, they generally cost less than GPUs, which matters when budgets are tight. CPUs also run cooler than GPUs, requiring less (and less expensive) cooling.

GPUs excel at large-scale machine learning and deep learning (ML/DL). Both can involve analyzing gigabytes, or even terabytes, of data for tasks such as image and video processing. For these jobs, the parallel processing capability of a GPU is a perfect match.

AI developers can also leverage a GPU’s parallel compute engines. They can do this by instructing the processor to partition complex problems into smaller, more manageable sub-problems. Then they can use libraries that are specially tuned to take advantage of high levels of parallelism.
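The partitioning idea can be sketched in a few lines of Python. This is a minimal illustration that uses only the standard library and runs on CPU worker threads; GPU libraries apply the same split, compute and combine pattern across thousands of cores. The function names here are hypothetical, and Python threads won’t actually speed up this arithmetic. The point is the pattern, not the performance.

```python
from concurrent.futures import ThreadPoolExecutor


def partition(data, parts):
    """Split data into at most `parts` contiguous chunks of near-equal size."""
    size = -(-len(data) // parts)  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


def sum_of_squares(chunk):
    """Sub-problem: process one chunk independently of the others."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(data, workers=4):
    """Partition the problem, solve the pieces concurrently, combine results."""
    if not data:
        return 0
    chunks = partition(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, chunks))


print(parallel_sum_of_squares(list(range(1_000))))
```

Because each chunk is independent, the work can be spread across as many workers as the hardware offers. That independence is what makes a problem a good fit for a GPU.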

Theory into practice

That’s the theory. Now let’s look at how some leading AI tech providers are putting the team approach of CPUs and GPUs into practice.

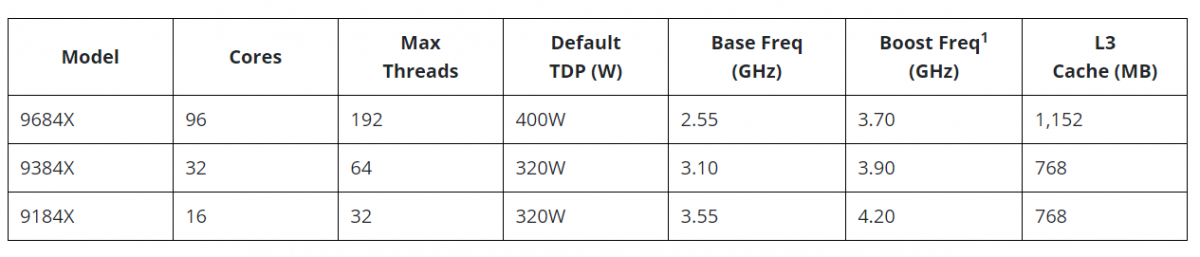

Supermicro offers its Universal GPU Systems, which combine Nvidia GPUs with CPUs from AMD, including the AMD EPYC 9004 Series.

An example is Supermicro’s H13 GPU server, one model of which is the AS -8125GS-TNHR. It packs an Nvidia HGX H100 multi-GPU board, dual-socket AMD EPYC 9004 Series CPUs, and up to 6TB of DDR5 DRAM.

For AI projects that span multiple servers, Supermicro offers SuperBlade systems designed for distributed, midrange AI and ML training. Large AI and ML workloads can require coordination among multiple independent servers, and the Supermicro SuperBlades are designed to do just that. Supermicro also offers rack-scale, plug-and-play AI solutions powered by the company’s GPU systems and turbocharged with liquid cooling.

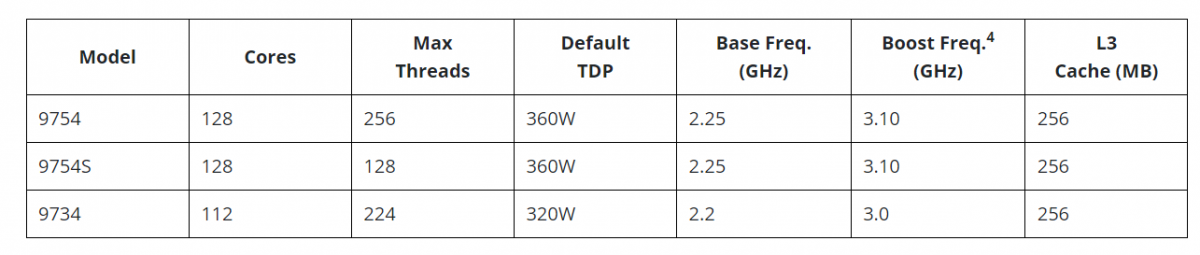

The Supermicro SuperBlade is available with a single AMD EPYC 7003/7002 Series processor with up to 64 cores. You also get AMD 3D V-Cache, up to 2TB of system memory per node, and a 200Gbps InfiniBand HDR switch. Within a single 8U enclosure, you can install up to 20 blades.
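Those per-blade figures add up quickly. As a quick check, the snippet below multiplies out the maximums quoted above for a fully populated enclosure. It assumes one node per blade, and the variable names are illustrative.

```python
# Maximums quoted above for one SuperBlade 8U enclosure.
# Assumption: each blade is a single node.
blades_per_enclosure = 20
cores_per_blade = 64        # one AMD EPYC processor, up to 64 cores
memory_tb_per_node = 2      # up to 2TB of system memory per node

total_cores = blades_per_enclosure * cores_per_blade
total_memory_tb = blades_per_enclosure * memory_tb_per_node

print(f"{total_cores} cores and {total_memory_tb}TB of memory per enclosure")
```

That works out to as many as 1,280 cores and 40TB of system memory in 8U of rack space.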

Looking ahead, AMD plans to ship its Instinct MI300A soon. It’s an integrated data-center accelerator that combines three key components: AMD Zen 4 CPUs, AMD CDNA3 GPUs, and high-bandwidth memory (HBM) chiplets. This new accelerator is designed specifically for HPC and AI workloads.

Also, the AMD Instinct MI300A’s high data throughput lets the CPU and GPU work on the same data in memory simultaneously. AMD says this CPU-GPU partnership will help users save power, boost performance and simplify programming.

Truly, a team effort.
