You couldn't call 2024 boring.

If anything, the year was almost too exciting, too packed with important events, and moving much too fast.

Looking back, a handful of 2024’s technology events stand out. Here are a few of our favorite things.

AI Everywhere

In March AMD’s chief technology officer, Mark Papermaster, made some startling predictions that turned out to be absolutely true.

Speaking at an investors’ event sponsored by Arete Research, Papermaster said, “We’re thrilled to bring AI across our entire product portfolio.” AMD has indeed done that, offering AI capabilities from PCs to servers to high-performance GPU accelerators.

Papermaster also said the buildout of AI is an event as big as the launch of the internet. That certainly sounds right.

He also said AMD believes the total addressable market for AI through 2027 to be $400 billion. If anything, that was too conservative. More recently, consultants Bain & Co. predicted that figure will reach $780 billion to $990 billion.

Back in March, Papermaster said AMD had increased its projection for full-year AI sales from $2 billion to $3.5 billion. That’s probably too low, too.

AMD recently reported revenue of $3.5 billion for its data-center group in the third quarter alone. The company attributed at least some of the group’s 122% year-on-year increase to the strong ramp of AMD Instinct GPU shipments.
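For a sense of scale, the 122% figure can be turned back into a rough year-ago baseline. The short sketch below does only that arithmetic; the function name is made up for illustration, and the result is an estimate derived from the two rounded numbers quoted above, not a figure reported by AMD.

```python
def prior_period_value(current, pct_increase):
    """Back out the earlier value from a current value and a percent increase."""
    return current / (1 + pct_increase / 100)

# $3.5 billion this quarter, up 122% year on year (both figures from the text).
prior = prior_period_value(3.5, 122)
print(f"Implied year-ago quarter: about ${prior:.2f} billion")
```

In other words, a 122% increase means the group’s quarterly revenue more than doubled in a year.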

5th Gen AMD EPYC Processors

October saw AMD introduce the fifth generation of its powerful line of EPYC server processors.

The 5th Gen AMD EPYC processors use the company’s new ‘Zen 5’ core architecture. The line includes over 25 SKUs offering anywhere from 8 to 192 cores. One model, the AMD EPYC 9575F, is designed specifically to work with GPU-powered AI solutions.

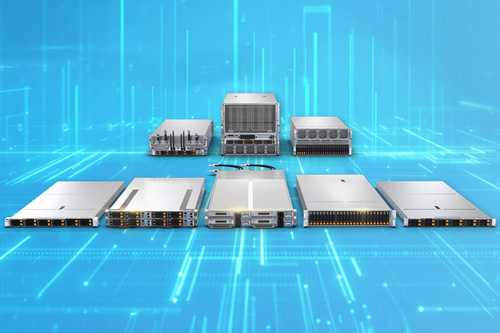

The market has taken notice. During the October event, AMD CEO Lisa Su told the audience that roughly one in three servers worldwide (34%) is now powered by AMD EPYC processors. And Supermicro launched its new H14 line of servers that will use the new EPYC processors.

Supermicro Liquid Cooling

As servers gain power to add AI and other compute-intensive capabilities, they also run hotter. For data-center operators, that presents multiple challenges. One big one is cost: air conditioning is expensive. What’s more, AC may be unable to cool the new generation of servers.

Supermicro has a solution: liquid cooling. For some time, the company has offered liquid cooling as a data-center option.

In November the company took a new step in this direction. It announced a server that comes with liquid cooling only.

The server in question is the Supermicro 2U 4-node FlexTwin, model number AS -2126FT-HE-LCC. It’s a high-performance, hot-swappable, high-density compute system designed for HPC workloads.

Each 2U system comprises 4 nodes, and each node is powered by dual AMD EPYC 9005 processors. (The previous-gen AMD EPYC 9004s are supported, too.)
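Those numbers add up quickly. Here is a back-of-the-envelope sketch of the core count in a single 2U system. The node and socket counts come from the description above; the 192-core figure is the top of the 5th Gen AMD EPYC range, and assuming that top SKU is used in every socket of this chassis is our assumption for illustration, not a confirmed configuration.

```python
NODES_PER_SYSTEM = 4      # from the text: each 2U system comprises 4 nodes
SOCKETS_PER_NODE = 2      # from the text: dual processors per node
MAX_CORES_PER_CPU = 192   # top of the 5th Gen EPYC range; assumed usable here

def cores_per_system(cores_per_cpu=MAX_CORES_PER_CPU):
    """Total CPU cores in one 2U system for a given per-processor core count."""
    return NODES_PER_SYSTEM * SOCKETS_PER_NODE * cores_per_cpu

print(cores_per_system())    # fully loaded with 192-core parts
print(cores_per_system(8))   # the smallest 8-core parts, for comparison
```

Under those assumptions, a single 2U box holds 1,536 cores, which helps explain why air cooling alone may not be enough.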

To keep cool, the FlexTwin server uses a direct-to-chip (D2C) cold plate liquid cooling setup. Each system also runs 16 counter-rotating fans. Supermicro says this cooling arrangement can remove up to 90% of server-generated heat.

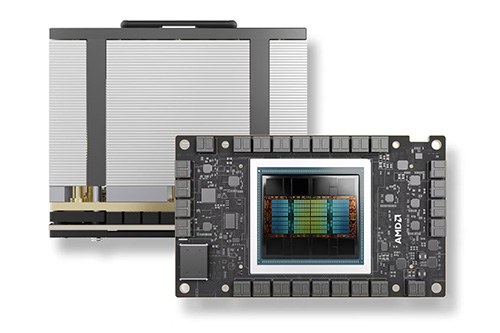

AMD Instinct MI325X Accelerator

A big piece of AMD’s product portfolio for AI is its Instinct line of accelerators. This year the company promised to maintain a yearly cadence of new Instinct models.

Sure enough, in October the company introduced the AMD Instinct MI325X Accelerator. It’s designed for generative AI performance and for working with large language models (LLMs). The accelerator offers 256GB of HBM3E memory and up to 6TB/sec. of memory bandwidth.

Looking ahead, AMD expects to formally introduce the line’s next member, the AMD Instinct MI350, in the second half of 2025. AMD has said the new accelerator will be powered by a new AMD CDNA 4 architecture, and will improve AI inferencing performance by up to 35x compared with the older Instinct MI300.

Supermicro Edge Server

A lot of computing now happens at the edge, far beyond either the office or corporate data center.

Even more edge computing is on tap. Market watcher IDC predicts double-digit growth in edge-computing spending through 2028, when it believes worldwide sales will hit $378 billion.

Supermicro is on it. At the 2024 MWC, held in February in Barcelona, the company introduced an edge server designed for the kind of edge data centers run by telcos.

Known officially as the Supermicro A+ Server AS -1115SV-WTNRT, it’s a 1U short-depth server powered by a single AMD EPYC 8004 processor with up to 64 cores. That’s edgy.

Happy Holidays from all of us at Performance Intensive Computing. We look forward to serving you in 2025.
