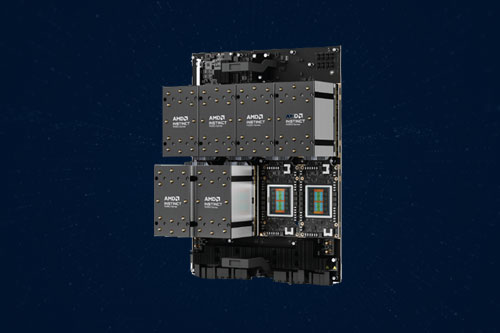

Supermicro didn’t waste any time supporting AMD’s new Instinct MI350 Series GPUs. The same day AMD formally introduced the new GPUs, Supermicro announced two rack-mount servers that support them.

The new servers, members of Supermicro’s H14 generation of GPU-optimized solutions, feature dual AMD EPYC 9005 CPUs along with AMD Instinct MI350 Series GPUs. They’re aimed at organizations seeking a combination that has historically been hard to achieve: maximum performance at scale in AI-driven data centers, together with a lower total cost of ownership (TCO).

To make the servers easy to upgrade and scale, Supermicro designed them around its proven building-block architecture.

Here’s a quick look at the two new Supermicro servers:

4U liquid-cooled system with AMD Instinct MI355X GPU

This system, model number AS -4126GS-NMR-LCC, comes with a choice of dual AMD EPYC 9005 or 9004 Series CPUs, either of which is liquid-cooled.

On the GPU front, users also have a choice of the AMD Instinct MI325X or brand-new AMD Instinct MI355X. Either way, this server can handle up to 8 GPUs.

Liquid cooling is provided by a single direct-to-chip cold plate. Further cooling comes from 5 heavy-duty fans and an air shroud.

8U air-cooled system with AMD Instinct MI350X GPU

This system, model number AS -8126GS-TNMR, comes with a choice of dual AMD EPYC 9005 or 9004 Series CPUs, both with air cooling.

Like the 4U server, this system supports both the AMD Instinct MI325X and the new AMD Instinct MI350X GPUs, and it can also handle up to 8 GPUs.

Air cooling is provided by 10 heavy-duty fans and an air shroud.

The two systems also share several features. These include PCIe 5.0 connectivity, large memory capacity (up to 2.3TB), and support for both AMD’s open-source ROCm software and AMD Infinity Fabric Link connections between GPUs.

“Supermicro continues to lead the industry with the most experience in delivering high-performance systems designed for AI and HPC applications,” says Charles Liang, president and CEO of Supermicro. “The addition of the new AMD Instinct MI350 series GPUs to our GPU server lineup strengthens and expands our industry-leading AI solutions and gives customers greater choice and better performance as they design and build the next generation of data centers.”

Do More:

- Learn more about the new Supermicro AI solutions.

- Get tech specs:

- Read related blog post: AMD presents its vision for the AI future.