AMD this week introduced the AMD EPYC 4005 series processors. These are purpose-built CPUs designed to bring enterprise-level features and performance to small and medium businesses.

And Supermicro, wasting no time, also announced that several of its servers are now shipping with the new AMD EPYC 4005 CPUs.

EPYC 4005

The new AMD EPYC 4005 series processors are intended for on-prem users and cloud service providers who need powerful but cost-effective solutions in a 3U height form factor.

Target customers include SMBs, departmental and branch-office server users, and hosted IT service providers. Typical workloads for servers powered by the new CPUs will include general-purpose computing, dedicated hosting, code development, retail edge deployments, and content creation, AMD says.

“We’re delivering the right balance of performance, simplicity, and affordability,” says Derek Dicker, AMD’s corporate VP of enterprise and HPC. “That gives our customers and system partners the ability to deploy enterprise-class solutions that solve everyday business challenges.”

The new processors feature AMD’s ‘Zen 5’ core architecture and come in a single-socket package. Depending on model, they offer anywhere from 6 to 16 cores; up to 192GB of dual-channel DDR5 memory; 28 lanes of PCIe Gen 5 connectivity; and boost clock speeds of up to 5.7 GHz. One model of the AMD EPYC 4005 line also includes integrated AMD 3D V-Cache tech for a larger 128MB L3 cache and lower latency.

In a standard 42U rack, servers powered by AMD EPYC 4005 can provide up to 2,080 cores (that’s 13 3U servers x 10 nodes/server x 16 cores/node). That level of density can reduce a user’s space requirements while also lowering their TCO.
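The rack-density figure is simple enough to check with a few lines of Python. This is only a rough sketch: the constant and function names are illustrative, not taken from AMD or Supermicro, and the inputs are just the numbers quoted above.

```python
# Inputs quoted in the article; all names here are illustrative.
RACK_HEIGHT_U = 42       # standard rack height, in rack units
SERVER_HEIGHT_U = 3      # each server occupies 3U
SERVERS_PER_RACK = 13    # server count used in the article's figure
NODES_PER_SERVER = 10    # 10-node configuration
CORES_PER_NODE = 16      # top core count in the EPYC 4005 range


def rack_core_count(servers: int, nodes_per_server: int, cores_per_node: int) -> int:
    """Total CPU cores across every node of every server in the rack."""
    return servers * nodes_per_server * cores_per_node


# Rack units consumed by the servers, and what is left over.
used_u = SERVERS_PER_RACK * SERVER_HEIGHT_U
spare_u = RACK_HEIGHT_U - used_u

total_cores = rack_core_count(SERVERS_PER_RACK, NODES_PER_SERVER, CORES_PER_NODE)

print(f"{SERVERS_PER_RACK} servers use {used_u}U, leaving {spare_u}U spare")
print(f"Total cores per rack: {total_cores:,}")
```

Swapping in `NODES_PER_SERVER = 5` or a lower core count shows how quickly the per-rack total changes with the configuration chosen.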

The new AMD CPUs follow the AMD EPYC 4004 series, introduced this time last year. The EPYC 4004 processors, still available from AMD, use the same AM5 socket as the 4005s.

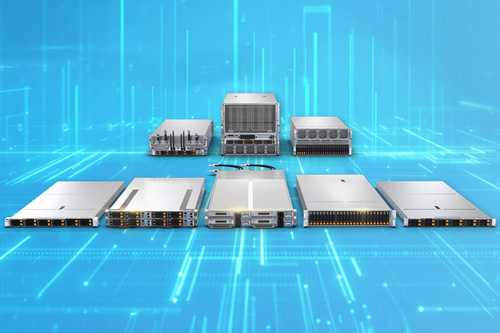

Supermicro Servers

Also this week, Supermicro announced that several of its servers are now shipping with the new AMD EPYC 4005 series processors. Supermicro also introduced a new MicroCloud 3U server that’s available in 10-node and 5-node versions, both powered by the AMD EPYC 4005 CPUs.

“Supermicro continues to deliver first-to-market innovative rack-scale solutions for a wide range of use cases,” says Mory Lin, Supermicro’s VP of IoT, embedded and edge computing.

Like the AMD EPYC 4005 CPUs, the Supermicro servers are intended for SMBs, departmental and branch offices, and hosted IT service providers.

The new Supermicro MicroCloud 10-node server features single-socket AMD processors (your choice of either 4004 or the new 4005) as well as support for one single-width GPU accelerator card.

Supermicro’s new 5-node MicroCloud server also offers a choice of AMD EPYC 4004 or 4005 series processors. In contrast to the 10-node server, the 5-node version supports one double-width GPU accelerator card.

Supermicro has also added support for the new AMD EPYC 4005 series processors to several of its existing server lines. These servers include 1U, 2U and tower servers.

Have SMB, branch or hosting customers looking for affordable compute power? Tell them to:

- Explore the AMD EPYC 4005 series processors

- Meet Supermicro servers now powered by AMD EPYC 4005 series processors