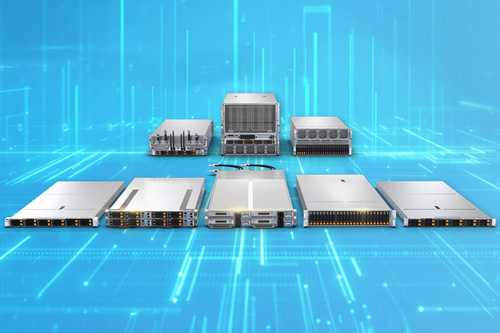

Wondering about the server of the future? It’s available for order now from Supermicro.

The company recently added support for the latest 5th Gen AMD EPYC 9005 Series processors on its 2U 4-node FlexTwin server with liquid cooling.

This server is part of Supermicro’s H14 line and bears the model number AS -2126FT-HE-LCC. It’s a high-performance, hot-swappable and high-density compute system.

Intended users include oil & gas companies, climate and weather modelers, manufacturers, scientific researchers and research labs. In short, anyone who requires high-performance computing (HPC).

Each 2U system comprises four nodes. And each node, in turn, is powered by a pair of 5th Gen AMD EPYC 9005 processors. (The previous-gen AMD EPYC 9004 processors are supported, too.)

Memory on this Supermicro FlexTwin maxes out at 9TB of DDR5, courtesy of up to 24 DIMM slots. Expansion cards connect via PCIe 5.0; one slot per node is standard, with more available as an option.
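As a quick sanity check on those memory figures, the arithmetic can be sketched in a few lines of Python. The sketch assumes that the 9TB maximum and the 24 DIMM slots refer to the same unit (the article does not say whether that is a single node or the whole system) and that 1TB equals 1,024GB. The constant and function names are illustrative only.

```python
# Figures quoted in the article. Whether they apply per node or per system
# is not stated, so this sketch assumes both describe the same unit.
MAX_MEMORY_TB = 9
DIMM_SLOTS = 24
GB_PER_TB = 1024  # assumption: binary terabytes


def gb_per_dimm(max_memory_tb: int, dimm_slots: int) -> float:
    """Return the DIMM size (in GB) needed to hit the maximum with every slot filled."""
    return max_memory_tb * GB_PER_TB / dimm_slots


if __name__ == "__main__":
    print(f"{gb_per_dimm(MAX_MEMORY_TB, DIMM_SLOTS):.0f} GB per DIMM")
```

Under those assumptions, reaching the 9TB ceiling takes a 384GB module in every slot.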

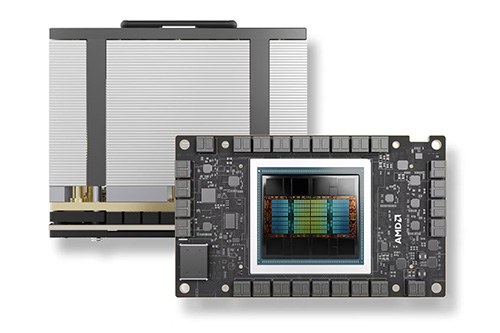

The 5th Gen AMD EPYC processors, introduced last month, are designed for data center, AI and cloud customers. The series launched with over 25 SKUs offering up to 192 cores, all built on AMD’s new “Zen 5” or “Zen 5c” architectures.

Keeping Cool

To keep things chill, the Supermicro FlexTwin server is available with liquid cooling only. This allows the server to be used for HPC, electronic design automation (EDA) and other demanding workloads.

More specifically, the FlexTwin server uses a direct-to-chip (D2C) cold plate liquid cooling setup, and each system also runs 16 counter-rotating fans. Supermicro says this cooling arrangement can remove up to 90% of server-generated heat.

The server’s liquid cooling also handles the more demanding cooling requirements of the 5th Gen AMD EPYC processors, which are rated at up to 500W of thermal design power (TDP). By comparison, some previous-generation 4th Gen AMD EPYC processors have a default TDP as low as 200W.
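To put those TDP figures in the context of a four-node, dual-socket chassis, here is a rough back-of-the-envelope sketch in Python. It assumes every socket is populated with a part at the quoted rating, which is a worst-case illustration, not a Supermicro specification. The function and constant names are hypothetical.

```python
# Figures quoted in the article.
NODES_PER_SYSTEM = 4
SOCKETS_PER_NODE = 2
MAX_TDP_5TH_GEN_W = 500  # top rating cited for 5th Gen AMD EPYC
LOW_TDP_4TH_GEN_W = 200  # lowest default cited for some 4th Gen parts


def cpu_tdp_per_system(tdp_watts: int,
                       nodes: int = NODES_PER_SYSTEM,
                       sockets: int = SOCKETS_PER_NODE) -> int:
    """Aggregate CPU TDP in watts for one fully populated 2U system."""
    return tdp_watts * nodes * sockets


if __name__ == "__main__":
    print("5th Gen, top-rated parts:", cpu_tdp_per_system(MAX_TDP_5TH_GEN_W), "W")
    print("4th Gen, lowest-rated parts:", cpu_tdp_per_system(LOW_TDP_4TH_GEN_W), "W")
```

With these inputs, the aggregate CPU TDP comes to 4,000W for a fully populated system at the top rating, versus 1,600W at the lowest 4th Gen figure. TDP is a design rating, not measured power draw, and memory, storage and other components add heat that is not counted here.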

Build & Recycle

The Supermicro FlexTwin server also adheres to the company’s “Building Block Solutions” approach. Essentially, this means end users purchase these servers by the rack.

Supermicro says its Building Blocks let users optimize for their exact workload. Users also gain efficient upgrading and scaling.

Looking even further into the future, once these servers are ready for an upgrade, they can be recycled through the Supermicro recycling program.

In Europe, Supermicro follows the EU’s Waste Electrical and Electronic Equipment (WEEE) Directive. In the U.S., recycling is free in California; users in other states may have to pay a shipping charge.

Put it all together, and you’ve got a server of the future, available to order today.

Do More:

- Check out the tech specs: Supermicro 2U 4-node FlexTwin server with liquid cooling & 5th Gen AMD EPYC processors

- Explore 5th Gen AMD EPYC processors