Bringing AMD Instinct to the Forefront

In the constantly evolving landscape of AI and machine learning, the synergy between hardware and software is paramount. Enter AMD and Supermicro, two industry titans who have joined forces to empower organizations in the new world of AI with cutting-edge solutions. Their shared vision? To enable organizations to unlock the full potential of AI workloads, from training massive language models to accelerating complex simulations.

The AMD Instinct MI300 Series: Changing the AI Acceleration Paradigm

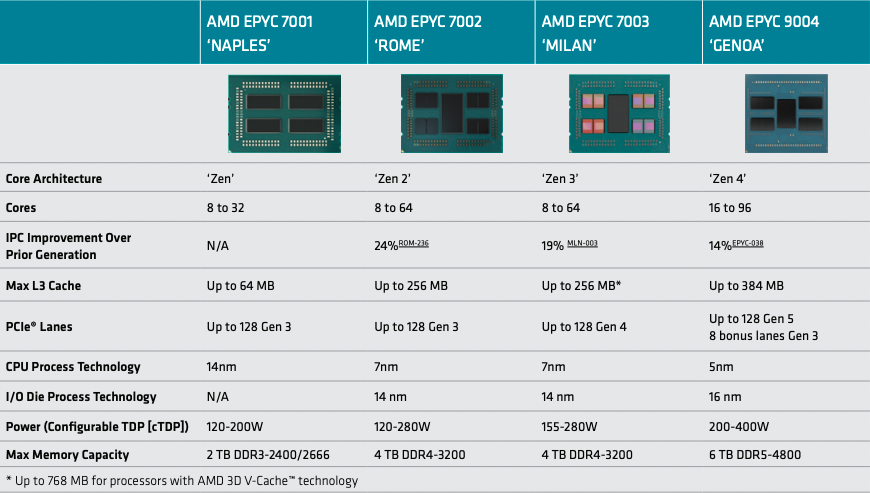

At the heart of this collaboration lies the AMD Instinct MI300 Series, a family of accelerators designed to redefine performance boundaries. Supermicro’s systems pair high-performance AMD EPYC™ 9004 series CPUs with AMD Instinct™ MI300X GPU accelerators, each carrying 192GB of HBM3 memory, creating a formidable force for AI, HPC, and technical computing.

Supermicro’s H13 Generation of GPU Servers

Supermicro’s H13 generation of GPU Servers serves as the canvas for this technological masterpiece. Optimized for leading-edge performance and efficiency, these servers integrate seamlessly with the AMD Instinct MI300 Series. Let’s explore the highlights:

8-GPU Systems for Large-Scale AI Training:

- Supermicro’s 8-GPU servers, equipped with the AMD Instinct MI300X OAM accelerator, offer raw acceleration power. The AMD Infinity Fabric™ Links enable up to 896GB/s of peak theoretical P2P I/O bandwidth, while 1.5TB of total HBM3 GPU memory (8 × 192GB) fuels large-scale AI models.

- These servers are ideal for LLM inference and for training language models with trillions of parameters, minimizing training time and inference latency, lowering TCO, and maximizing throughput.
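
To make the memory figures above concrete, here is a rough back-of-the-envelope sketch in Python. It multiplies out the per-GPU capacity quoted in the text and estimates how many GPUs are needed just to hold a model's weights. The 16-bit weight size, the helper names, and the example model sizes are illustrative assumptions, and the estimate deliberately ignores activations, KV cache, and optimizer state, so real deployments will need more headroom.

```python
import math

GPUS_PER_SERVER = 8        # from the text: 8-GPU systems
HBM3_PER_GPU_GB = 192      # from the text: 192GB of HBM3 per MI300X
BYTES_PER_PARAM = 2        # assumption: 16-bit (FP16/BF16) weights


def total_hbm_gb(gpus=GPUS_PER_SERVER, per_gpu_gb=HBM3_PER_GPU_GB):
    """Aggregate GPU memory in one server, in GB."""
    return gpus * per_gpu_gb


def weights_gb(params_billions, bytes_per_param=BYTES_PER_PARAM):
    """Memory for the weights alone: 1e9 params * bytes, expressed in GB (1e9 bytes)."""
    return params_billions * bytes_per_param


def min_gpus_for_weights(params_billions, per_gpu_gb=HBM3_PER_GPU_GB):
    """Smallest GPU count whose combined HBM3 can hold the weights (weights only)."""
    return math.ceil(weights_gb(params_billions) / per_gpu_gb)


if __name__ == "__main__":
    total = total_hbm_gb()
    print(f"Total HBM3 per server: {total} GB ({total / 1024:.1f} TB)")
    # Hypothetical model sizes, in billions of parameters.
    for size in (13, 70, 176):
        print(f"{size}B params: ~{weights_gb(size)} GB of weights, "
              f"at least {min_gpus_for_weights(size)} GPU(s)")
```

Under these assumptions, a 70-billion-parameter model's weights (about 140GB) fit within a single accelerator's 192GB, which is one reason large per-GPU memory matters for inference.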

Benchmarking Excellence

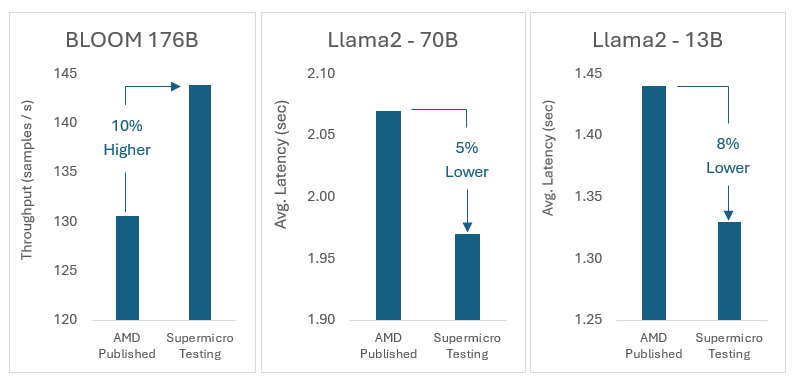

But what about real-world performance? Fear not! Supermicro’s ongoing testing and benchmarking efforts have yielded remarkable results. Continued engagement between the AMD and Supermicro performance teams enabled Supermicro to test pre-release ROCm versions with the latest performance optimizations, along with publicly released optimizations such as Flash Attention 2 and vLLM. The Supermicro AMD-based system AS -8125GS-TNMR2 showcases AI inference prowess, especially on models like Llama-2 70B, Llama-2 13B, and Bloom 176B. The performance? Equal to or better than AMD’s published results from the Dec. 6, 2023 Advancing AI event.

Charles Liang’s Vision

In the words of Charles Liang, President and CEO of Supermicro:

“We are very excited to expand our rack scale Total IT Solutions for AI training with the latest generation of AMD Instinct accelerators. Our proven architecture allows for fully integrated liquid cooling solutions, giving customers a competitive advantage.”

Conclusion

The AMD-Supermicro partnership isn’t just about hardware and software stacks; it’s about pushing boundaries, accelerating breakthroughs, and shaping the future of AI. So, as we raise our virtual glasses, let’s toast to innovation, collaboration, and the relentless pursuit of performance and excellence.